Second-Generation NVLink™ The second generation of NVIDIA’s NVLink high-speed interconnect delivers higher bandwidth, more links, and improved scalability for multi-GPU and multi-GPU/CPU system configurations.Finally, a new combined L1 Data Cache and Shared Memory subsystem significantly improves performance while also simplifying programming. Volta’s new independent thread scheduling capability enables finer-grain synchronization and cooperation between parallel threads. With independent, parallel integer and floating point datapaths, the Volta SM is also much more efficient on workloads with a mix of computation and addressing calculations. New Tensor Cores designed specifically for deep learning deliver up to 12x higher peak TFLOPs for training. The new Volta SM is 50% more energy efficient than the previous generation Pascal design, enabling major boosts in FP32 and FP64 performance in the same power envelope. New Streaming Multiprocessor (SM) Architecture Optimized for Deep Learning Volta features a major new redesign of the SM processor architecture that is at the center of the GPU.Key compute features of Tesla V100 include the following. Right: Given a target latency per image of 7ms, Tesla V100 is able to perform inference using the ResNet-50 deep neural network 3.7x faster than Tesla P100.

Figure 2: Left: Tesla V100 trains the ResNet-50 deep neural network 2.4x faster than Tesla P100. Figure 2 shows Tesla V100 performance for deep learning training and inference using the ResNet-50 deep neural network. GV100 is an extremely power-efficient processor, delivering exceptional performance per watt. Further simplifying GPU programming and application porting, GV100 also improves GPU resource utilization. GV100 delivers considerably more compute performance, and adds many new features compared to its predecessor, the Pascal GP100 GPU and its architecture family. It is fabricated on a new TSMC 12 nm FFN high performance manufacturing process customized for NVIDIA.

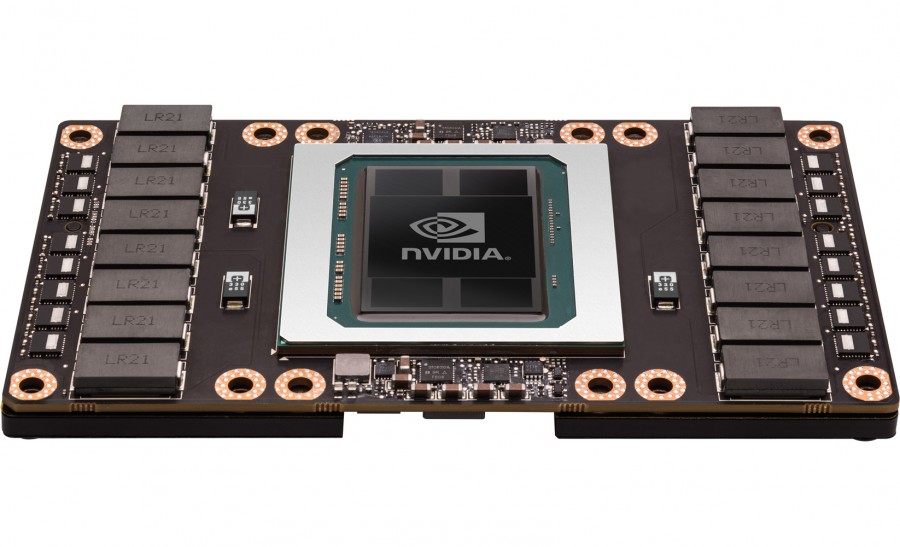

The GV100 GPU includes 21.1 billion transistors with a die size of 815 mm2. The NVIDIA Tesla V100 accelerator is the world’s highest performing parallel processor, designed to power the most computationally intensive HPC, AI, and graphics workloads. Tesla V100: The AI Computing and HPC Powerhouse In this blog post we will provide an overview of the Volta architecture and its benefits to you as a developer. It offers a platform for HPC systems to excel at both computational science for scientific simulation and data science for finding insights in data. AI extends traditional HPC by allowing researchers to analyze large volumes of data for rapid insights where simulation alone cannot fully predict the real world.īased on the new NVIDIA Volta GV100 GPU and powered by ground-breaking technologies, Tesla V100 is engineered for the convergence of HPC and AI. From predicting weather, to discovering drugs, to finding new energy sources, researchers use large computing systems to simulate and predict our world. High Performance Computing (HPC) is a fundamental pillar of modern science. Figure 1: The Tesla V100 Accelerator with Volta GV100 GPU. Solving these kinds of problems requires training exponentially more complex deep learning models in a practical amount of time. Today at the 2017 GPU Technology Conference in San Jose, NVIDIA CEO Jen-Hsun Huang announced the new NVIDIA Tesla V100, the most advanced accelerator ever built.įrom recognizing speech to training virtual personal assistants to converse naturally from detecting lanes on the road to teaching autonomous cars to drive data scientists are taking on increasingly complex challenges with AI.
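
To put the headline numbers quoted above in perspective, here is a small back-of-the-envelope sketch. It only rearranges figures stated in this post (21.1 billion transistors, an 815 mm² die, and the 2.4x and 3.7x ResNet-50 speedups over Tesla P100). The helper names `transistor_density` and `relative_time` are illustrative assumptions, not part of any NVIDIA tool, and reading the 3.7x inference figure as "time for the same amount of work" is a simplification.

```python
# Back-of-the-envelope arithmetic on figures quoted in this post.
# Function names are hypothetical helpers, chosen for illustration only.

TRANSISTORS = 21.1e9   # GV100 transistor count
DIE_AREA_MM2 = 815.0   # GV100 die size in square millimetres
TRAIN_SPEEDUP = 2.4    # ResNet-50 training, V100 vs. P100 (Figure 2, left)
INFER_SPEEDUP = 3.7    # ResNet-50 inference at 7 ms latency (Figure 2, right)


def transistor_density(transistors: float, area_mm2: float) -> float:
    """Average number of transistors per square millimetre of die."""
    return transistors / area_mm2


def relative_time(speedup: float) -> float:
    """Fraction of the baseline time needed for the same work after a speedup."""
    return 1.0 / speedup


if __name__ == "__main__":
    density = transistor_density(TRANSISTORS, DIE_AREA_MM2)
    print(f"Density: {density / 1e6:.1f} million transistors per mm^2")
    print(f"Training time relative to P100: {relative_time(TRAIN_SPEEDUP):.1%}")
    print(f"Inference time relative to P100: {relative_time(INFER_SPEEDUP):.1%}")
```

Under these assumptions the die averages roughly 25.9 million transistors per mm², and a 2.4x training speedup means the same training job takes a little under 42% of the time it took on Tesla P100.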